The Five Senses of Robotics


Last week, General Motors stepped up its autonomous car effort by acquiring the LIDAR (Light Imaging, Detection, And Ranging) technology company Strobe, giving its artificial intelligence unit, Cruise Automation, greater perception capabilities. GM purchased Cruise with great fanfare last year for a billion dollars. Strobe’s unique value proposition is shrinking its optical arrays to the size of a microchip, thereby substantially reducing the cost of a traditionally expensive sensor that autonomous vehicles rely on to measure the distance to objects on the road. Cruise CEO Kyle Vogt wrote last week on Medium that “Strobe’s new chip-scale LIDAR technology will significantly enhance the capabilities of our self-driving cars. But perhaps more importantly, by collapsing the entire sensor down to a single chip, we’ll reduce the cost of each LIDAR on our self-driving cars by 99%.”

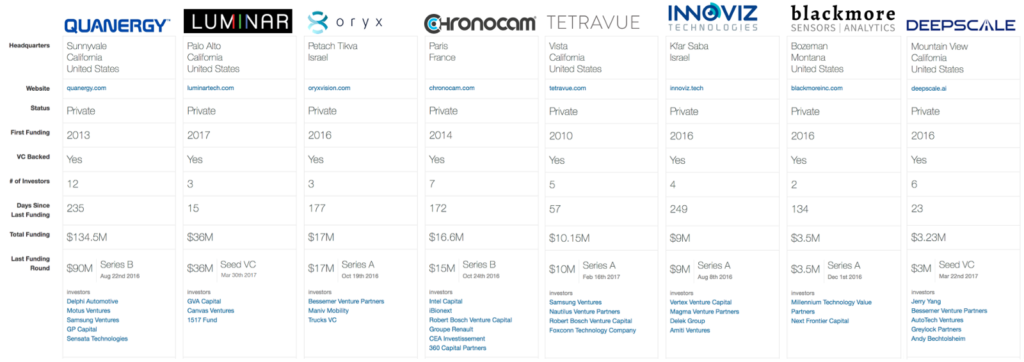

GM is not the first Detroit automaker aiming to reduce the cost of sensors on the road; last year Ford invested $150 million in Velodyne, the leading LIDAR company on the market. Velodyne is best known for its rotating sensor, which is often mistaken for a siren on top of the car. In describing the transaction, Raj Nair, Ford’s Executive Vice President, Product Development and Chief Technical Officer, said, “From the very beginning of our autonomous vehicle program, we saw LIDAR as a key enabler due to its sensing capabilities and how it complements radar and cameras. Ford has a long-standing relationship with Velodyne and our investment is a clear sign of our commitment to making autonomous vehicles available for consumers around the world.” As the race among competing perception technologies heats up, LIDAR startups already form a crowded field, with eight other companies (below) competing to become the standard vision system for autonomous driving.

Across the ocean, Dr. Hossam Haick of the Technion-Israel Institute of Technology has built an intelligent olfactory system that can diagnose cancer. Dr. Haick explains, “My college roommate had leukemia, and it made me want to see whether a sensor could be used for treatment. But then I realized early diagnosis could be as important as treatment itself.” Patients breathe into a tube, where an array of sensors composed of “gold nanoparticles or carbon nanotube” detects cancer biomarkers through smell. “We send all the signals to a computer, and it will translate the odor into a signature that connects it to the disease we exposed to it,” says Dr. Haick. Last December, Haick’s AI reported 86% accuracy in predicting cancers in more than 1,400 subjects in 17 countries. The accuracy increased when its neural network was applied to specific disease cases. Haick’s machine could one day have better olfactory senses than canines, which have been shown to be able to sniff out cancer.
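To make the idea of an odor "signature" a bit more concrete, here is a toy sketch of matching a sensor-array reading against stored reference signatures. It is not Dr. Haick’s method: his system uses a neural network, while this example swaps in a much simpler nearest-signature comparison. The four-sensor array, the labels, and every number below are invented purely for illustration.

```python
import math

# Hypothetical reference signatures: the typical response of an imagined
# 4-sensor array for each label. All values are made up for illustration.
REFERENCE_SIGNATURES = {
    "healthy": [0.10, 0.20, 0.15, 0.05],
    "condition_a": [0.60, 0.25, 0.70, 0.10],
    "condition_b": [0.15, 0.80, 0.20, 0.65],
}


def normalize(readings):
    """Scale a reading to unit length so overall signal strength does not dominate."""
    norm = math.sqrt(sum(r * r for r in readings))
    if norm == 0:
        raise ValueError("sensor readings are all zero")
    return [r / norm for r in readings]


def classify(readings, references=REFERENCE_SIGNATURES):
    """Return the label whose normalized signature is nearest to the reading."""
    sample = normalize(readings)
    best_label, best_distance = None, float("inf")
    for label, reference in references.items():
        distance = math.dist(sample, normalize(reference))
        if distance < best_distance:
            best_label, best_distance = label, distance
    return best_label


if __name__ == "__main__":
    # A made-up breath sample whose pattern resembles "condition_a".
    print(classify([0.58, 0.27, 0.66, 0.12]))
```

Normalizing first means a faint breath sample and a strong one with the same pattern land on the same label, which is the intuition behind calling the pattern a signature. A real diagnostic system would need far more sensors, clinical training data, and validation than this sketch suggests.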

While writing this post on robotic senses, I had several conversations with Alexa, and I am always impressed with her auditory processing skills. It seems that the only area in which humans will exceed robots will be taste; however, I am reminded of Dr. Hod Lipson’s food printer. As I watched Lipson’s concept video of the machine squirting, layering, pasting and even cooking something that resembled Willy Wonka’s “Everlasting Gobstopper,” I sat back in his Creative Machines Lab realizing that Sci-Fi is no longer fiction.

Want to know more about LIDAR technology and self-driving systems? Join RobotLab’s next forum on “The Future of Autonomous Cars” with Steve Girsky, formerly of General Motors: November 29th @ 6pm, WeWork Grand Central NYC, RSVP.